High ROI of Efficient Product Research

Many product teams forgo UX research because they fear, or have found from experience, that research slows their work down or costs too much. These objections are valid, so in this paper I address them and show how efficient, well-designed UX research can deliver insights quickly and produce a high return on investment.

Assumption 1: "We Already Know Our Users"

Most teams do know their users: they've been studying them and building for them. That familiarity is real, but familiarity isn't always the same as accuracy.

Familiarity creates confidence. Confidence creates blind spots. The longer a team has been building for a specific persona, the more invisible the assumptions underneath that persona become. Teams stop asking who the user is and start assuming they already know.

This is how entire segments of users go undetected — not because the team was careless, but because the research that would have surfaced them was never done.

Case Study - Online Grocery Shopping

The product team had built everything around a single persona: the 'Busy Mom.' They knew her well. They'd mapped her journey, optimized her checkout flow, and built substitution logic around her shopping habits.

What they had missed: one-third of delivery customers were 'Housebound Shoppers', individuals with physical disabilities, neurodiversity, or unreliable transportation who depended on delivery in ways the Busy Mom never did. Unlike pickup customers, who could reject incorrect substitutions in person, Housebound Shoppers had no recourse. When the system replaced an item chosen for a dietary restriction with one they couldn't use, they were forced to absorb the cost. The result: elevated churn, negative word-of-mouth, and refund losses, all from a segment the product team didn't know existed.

Mixed-methods research (surveys + in-depth interviews) identified this segment, leading to a persona expansion and a corrected substitution-and-recovery flow that stabilized retention.

Case Study - Boardgame Finder - Not All Gamers are Nerds

Assumption 2: "We Know What Our Users Want"

Users will tell you the features they'd like, the problems that frustrate them, the improvements they'd request. They're far less reliable reporters of what would actually change their behavior.

This gap — between stated preference and actual need — is where failed products are born. A team can run a survey, collect 500 responses, and still build the wrong thing, because users describe wants while good research surfaces needs.

The two are related, but they're not the same. A user who says they want 'a faster checkout' might just need fewer decisions. Responding to stated wants without understanding underlying needs means solving the symptom, not the problem.

Case Study - Enterprise Analytics Platform

The new platform was objectively more powerful than the legacy system it was designed to replace. Faster processing, more features, cleaner architecture. But many small business users described it as 'overwhelming, confusing, frustrating, and slow' — and refused to transition.

The team's instinct was to force this segment onto the new platform and hope they would come to appreciate the extra features. Research showed something different: most of these users didn't need more features. They needed more control and a less cluttered dashboard. The research redirected development to address the needs of both user segments.

The strategic redesign informed by these findings increased platform adoption by 30% and delivered $1M+ in annual savings.

Assumption 3: "We Have No Time for Research. We're Moving Fast."

Time is a real constraint. The question is what "efficient" really means once you've built the wrong thing.

Fixing a defect after release costs 30 times more than catching it during the design phase (IBM). Every week of 'saved' research time compounds into weeks of rework — plus launch costs, user churn, reputational loss, support volume, and the opportunity cost of work your team wasn't doing instead.

The assumption that research takes weeks is outdated. The sprint model below delivers actionable insights in 5 to 8 days.

Case Study - Social Media Innovation Lab's Unwanted App

Not every bold idea deserves to be built. When an international social media company's Innovation Lab tested a slate of unconventional app concepts, diary studies with potential users made one thing clear: some weren't worth pursuing. That finding alone saved $110,000 and freed five engineers for two solid months — time that went toward shipping real product.

That's not research as overhead. That's research as capital protection.

The Research - Design Sprint

Good UX can structure engagements as 5-to-8-day sprints, a model refined and field-tested during my time as Senior Rapid Researcher at Google.

The sprint may run from stakeholder alignment on Day 1 through data collection (e.g., user interviews), AI-assisted synthesis, rapid prototyping, and usability testing, to actionable deliverables on Day 8. Whether the question is generative — exploring a problem space, surfacing unmet needs, or building foundational user understanding — or evaluative — testing a prototype, validating a redesign, or diagnosing adoption barriers — the sprint adapts to fit it.

AI-assisted analysis accelerates synthesis by 30–40%, compressing the discovery cycle without sacrificing depth.

Human data collection and expert interpretation remain central: pattern detection is automated; insight is not.

Organizations that invest in research during the concept phase reduce their development cycles by 33–50% (AnswerLab/Interaction Design Foundation). With research done right, product development isn't slower than shipping blind. It's faster.

Assumption 4: "Our Users Have No Disabilities"

This is the assumption I hear most often. In reality, well over a billion people experience some form of permanent disability (according to the WHO, 1.5 billion people live with hearing loss alone), and anyone can experience a temporary disability (a broken arm) or a situational one (a noisy environment).

Disability status is one of the most neglected variables in user research. Users with physical disabilities, neurodiversity, low vision, hearing loss, and mobility constraints frequently go unrepresented in standard panels — not because they aren't using products, but because they're seldom specifically recruited.

The result is products that work for the users you tested and fail the users you excluded.

Case Study - Online Grocery - One Third of the Customer Base

Returning to the Housebound Shopper finding: one-third of grocery delivery customers in this engagement had physical disabilities, neurodiversity, or mobility constraints. These customers are not edge cases, and 33% is not a rounding error.

This segment was invisible in every existing persona, absent from the journey maps, and profoundly underserved by the product's substitution and recovery logic. These customers couldn't refuse incorrect items at the door or drive to the store to return them. They absorbed the cost of every wrong substitution until they stopped using the service.

Inclusive research surfaced this segment. The business impact of fixing it extended well beyond accessibility compliance.

Case Study - AI Translation App for Deaf and Hard-of-Hearing Users

At Microsoft, I led RITE (Rapid Iterative Testing and Evaluation) research on an AI-based translation and transcription app, testing with able-bodied, neurodiverse, and deaf/hard-of-hearing users.

The needs of each group differed in ways that were invisible without direct observation. Interfaces that worked cleanly for hearing users introduced friction at critical points for deaf and hard-of-hearing users, and vice versa. This wasn't a minor UX detail. It was a core product decision, and it required all three groups to be included in usability research.

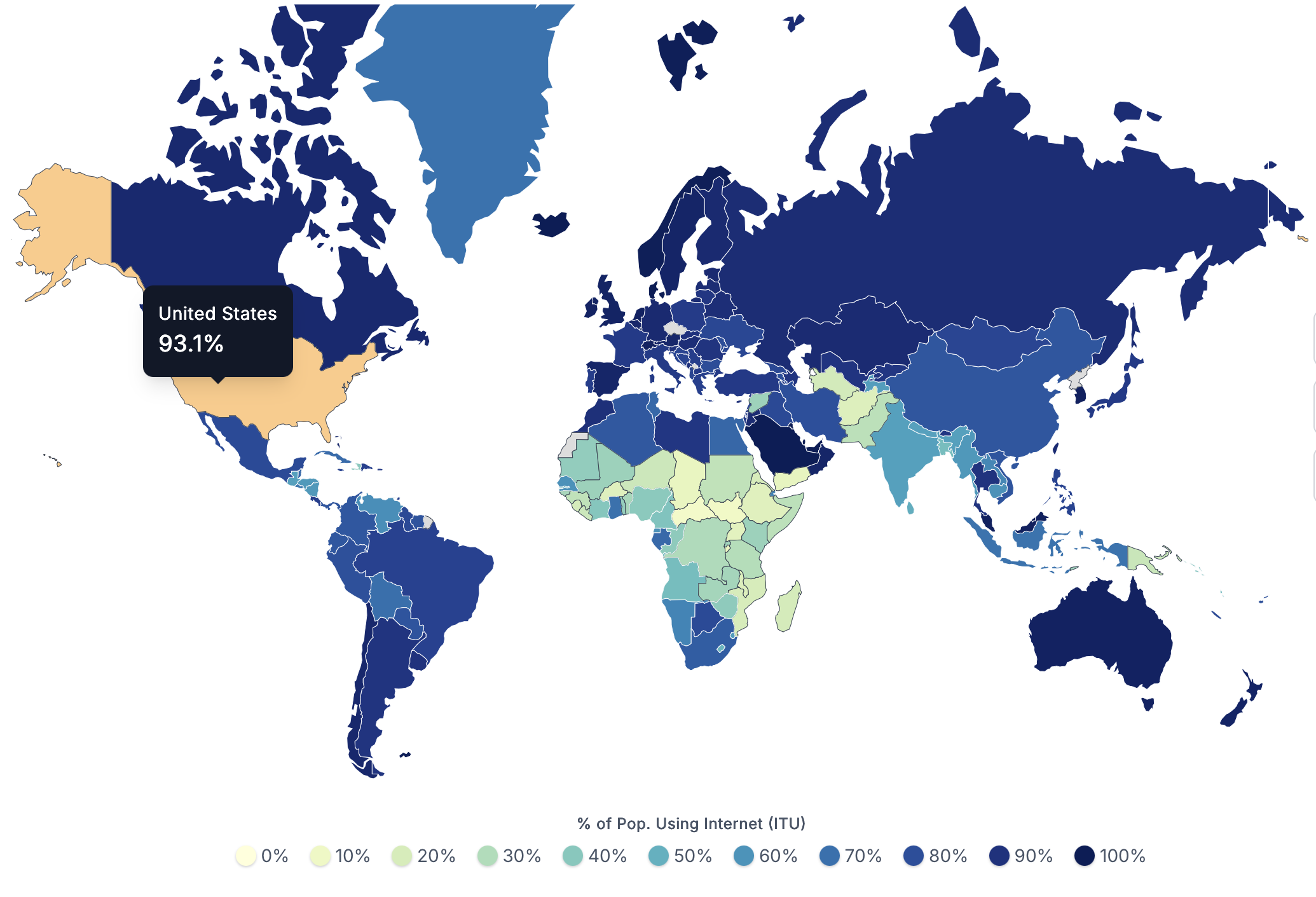

93.1% of People in the US are Internet Users

We're Ready to Dive into Research and Help Your Project Succeed!

Feel free to schedule a call with me to talk through your research needs.

Agnes@good-ux.org